One common misconception among audiophiles goes like this:

1. The Whittaker-Nyquist-Kotelnikov-Shannon theorem states that if your sampling rate is 2*X Hz, you can perfectly restore signals with frequencies below X Hz

2. The limit of human hearing is (well) below 22050 Hz

3. Ergo: Music can be encoded at 44100 Hz and anything more is pure waste, snake oil, etc.

So how does it work in practice? Let’s do a quick check. Listen to the following file:

Click

It’s sampled at 8000 Hz and contains a 3999.5 Hz sine wave. A sine wave has a nice property: its sound level is constant. So why is what you hear clearly pulsating? Because it wasn’t correctly preserved. Why? Because the Nyquist-Shannon theorem doesn’t work here. Why? Because the decoder doesn’t use the Nyquist-Shannon algorithm to recreate it. And why? Because music is never an infinitely long signal, and that’s what the exact reconstruction promised by the Nyquist-Shannon theorem requires.

Now, before I get to the details, a remark: I never studied signal theory; what I know comes from general mathematical sense and peeks at Wikipedia. I might get some details wrong. However, my understanding proved good enough to predict the effect presented here, so I think it’s good enough to talk about it.

[ADDED]

Some people pointed out that the example is bad. Their language was too advanced for me, but what I think they meant is that good filtering in a digital-to-analog converter could fix the pulsation. That negates a major part of my post, but doesn’t change its substance.
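To get a feel for what "good filtering" could do, here is a minimal sketch of ideal sinc (Whittaker-Shannon) interpolation in plain Python. Treating this sum as a stand-in for a real converter's filter is an assumption, and the names `sinc`, `reconstruct` and the two-second window `N` are made up for illustration. It evaluates the reconstruction halfway between two samples that are both almost zero, which is where the pulsation is at its quietest.

```python
import math

FS = 8000      # sampling rate in Hz, from the post
F = 3999.5     # sine frequency in Hz, from the post
N = 16001      # two seconds of samples (arbitrary choice for this sketch)

samples = [math.sin(2 * math.pi * F * n / FS) for n in range(N)]

def sinc(x):
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)

def reconstruct(t):
    """Sinc interpolation at time t (measured in samples), using only the
    finite list of samples above instead of an infinite sum."""
    return sum(s * sinc(t - n) for n, s in enumerate(samples))

t = 8000.5                                    # halfway between samples 8000 and 8001
neighbours = (samples[8000], samples[8001])   # the two nearest stored samples
true_value = math.sin(2 * math.pi * F * t / FS)
estimate = reconstruct(t)

print("neighbouring samples:", neighbours)
print("true sine value:     ", true_value)
print("sinc estimate:       ", estimate)
```

If the sketch is right, the two neighbouring samples are close to zero while the estimate has the same sign as the true sine and sits much closer to full amplitude than to zero. It is only approximate, because the sum is cut off after two seconds of samples instead of running forever.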

I’m learning signal processing basics to understand the issue better.

Thanks for all helpful comments.

[/ADDED]

[ADDED2]

I half-read a book on signal processing and then had a very hard month with no time for anything. Now I’m returning to life, but in the meantime I lost the will to keep investigating what was wrong. I’m leaving it for now. I think I’ll return to it one day. I will certainly be much more careful when talking about related issues, and probably one day I’ll again want to learn enough to carry on. But nothing is certain; I may leave it forever.

[/ADDED2]

Also, the following assumes you understand signal sampling. If you don’t, take a quick look here.

Let’s look at the signal.

I took a series of close-ups before getting to particular samples, so you have an overview of where you are:

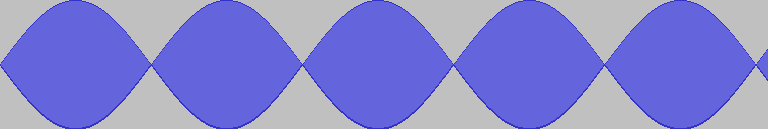

We have samples oscillating at a frequency of (exactly!) 4000 Hz. The amplitude starts small, grows to the maximum, then drops back down.
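The pattern in the close-ups can be checked numerically. The sketch below is not how the file was made (that was Audacity); it just recomputes the samples in plain Python and compares them with a guess: a sign flip on every sample (the 4000 Hz oscillation) multiplied by a slow envelope at FS/2 - F = 0.5 Hz. The function names are hypothetical.

```python
import math

FS = 8000    # sampling rate in Hz, from the post
F = 3999.5   # sine frequency in Hz, from the post

def sample(n):
    """Sample n of a unit-amplitude sine at F Hz."""
    return math.sin(2 * math.pi * F * n / FS)

def predicted(n):
    """Alternating sign times a slow envelope:
    sin(pi*n - theta) = (-1)^(n+1) * sin(theta), theta = 2*pi*(FS/2 - F)*n/FS."""
    sign = 1 if n % 2 else -1
    return sign * math.sin(2 * math.pi * (FS / 2 - F) * n / FS)

# Largest disagreement between the real samples and the prediction over 2 seconds.
worst = max(abs(sample(n) - predicted(n)) for n in range(2 * FS))
print("largest difference:", worst)

# Envelope size at 0 s, 0.25 s, 0.5 s, 0.75 s and 1 s.
for n in (0, 2000, 4000, 6000, 8000):
    print(n, round(abs(predicted(n)), 3))
```

The difference should be down at floating-point noise level, which means the stored samples really are a 4000 Hz flip whose size swells and fades about once per second.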

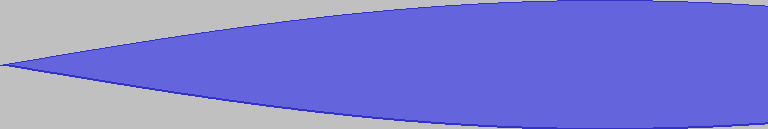

I think the best way to explain why this happens is to draw a sine with frequency X sampled at a rate a little over 2*X:

Does it resemble something? Every 2 samples you land close to where you were previously, but not exactly: one cycle of the wave takes slightly longer than 2 samples, so you sample it a little further back. You go further and further back, and at some point you’re at the peak. And so on, for as long as you wish.
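The "further and further back" drift can be made concrete with exact fractions. This is a small sketch using the numbers of the example file (8000 Hz sampling, 3999.5 Hz sine); `cycle_position` is a made-up helper that reports where in the sine's cycle a given sample lands, as a fraction between 0 and 1.

```python
from fractions import Fraction

FS = 8000               # sampling rate in Hz, from the post
F = Fraction(7999, 2)   # 3999.5 Hz, kept exact to avoid rounding

def cycle_position(n):
    """Fraction of a full sine cycle (0 up to 1) at which sample n lands."""
    return (F * n / FS) % 1

# Look at every second sample: each lands slightly earlier in the cycle.
for n in (0, 2, 4, 6, 8):
    print(n, cycle_position(n))

# Size of the backward step between consecutive even samples.
step_back = cycle_position(2) - cycle_position(4)
print("step back per 2 samples:", step_back)
```

With these numbers each pair of samples slips back by 1/8000 of a cycle, so it takes thousands of samples to slide from a zero crossing to a peak, which matches the slow swelling in the close-ups.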

Now, this is just an example of what can happen, and obviously a purely artificial one. But you can run into other sampling artifacts too, and not only at frequencies close to the maximum; at lower frequencies the problem is just smaller.
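One rough way to compare frequencies is to look at the biggest raw sample value in each short stretch of the signal and see how much it wanders. The sketch below does that for the 3999.5 Hz tone and for two lower tones; 3000.5 Hz and 1000.5 Hz are arbitrary comparison points, and `window_peaks` with its 16-sample window is a hypothetical helper. It measures stored samples only, not what a reconstruction filter would output.

```python
import math

FS = 8000  # sampling rate in Hz, as in the post's example

def window_peaks(freq, window=16, seconds=2):
    """Peak absolute sample value in each consecutive block of `window` samples."""
    total = FS * seconds
    return [
        max(abs(math.sin(2 * math.pi * freq * n / FS))
            for n in range(start, start + window))
        for start in range(0, total, window)
    ]

for freq in (3999.5, 3000.5, 1000.5):
    peaks = window_peaks(freq)
    # Smallest and largest block peak: a wide gap means visible pulsation.
    print(freq, round(min(peaks), 3), round(max(peaks), 3))
```

For the tone next to the limit the block peaks should range from almost nothing up to full scale, while for the lower tones they stay much closer to full scale, in line with the problem being "just smaller" there.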

To sum up:

No, 44100 Hz is not enough to perfectly preserve audible frequencies. Neither is 96 kHz or more. That’s because the so commonly quoted Nyquist-Shannon theorem doesn’t work with finite signals, and decoders take the simpler route. And good for them.

There’s also the very important question of whether such imperfections matter in practice. Maybe using higher sampling rates indeed doesn’t produce audible improvements?

This is not the point of this post, but I’ll answer it anyway: I don’t know.

UPDATE:

Thanks to bandpass, who spotted that the file was actually 44.1 kHz: Audacity exported it badly and I failed to notice. I have now replaced it with a correct file. It doesn’t sound any different, though.